This is an archived version of the Machine Learning Lab website for documentation purposes. The host is in no way affiliated with the University of Freiburg, its Faculty of Engineering or any of its members.

Reinforcement Controllers in Technical Applications

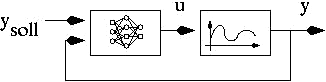

The idea of using reinforcement learning controllers in feedback control loops is extremely attractive: the designer specifies only the target value(s), and the controlling agent learns to reach this goal incrementally from its experience of success or failure. Using neural networks as the basis of such a reinforcement learning agent, we were able to show that these controllers can learn successfully in very complex and nonlinear control situations.

- Reinforcement learning in automotive engines: This project, carried out in cooperation with a German automobile company, aimed to learn to control the complex behavior of a combustion engine using only information about success or failure. The learning agent had to control both fuel (injection time) and air (throttle angle). We successfully established a learning system based on a multi-agent approach using neural networks. It learned to control the engine quickly and accurately while simultaneously respecting important constraints.

- Reinforcement learning for thermostat control: The problem of heating a fluid can be formulated as a reinforcement learning problem: the goal is achieved when the fluid reaches the desired temperature. The difficulty is that heating can be extremely slow and show considerable delays. A controller therefore has to be very precise in its decisions to reach the target with the desired accuracy.
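
The thermostat formulation above can be sketched as a small simulation. This is a toy model under stated assumptions, not the controller or plant from the case study: the class `DelayedHeater`, the helpers `run_episode` and `bang_bang`, and all constants (target, tolerance, gain, loss, delay) are hypothetical and chosen only to illustrate a slow, delayed process with a pure success/failure reward.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class DelayedHeater:
    """Toy first-order heating process with dead time (all constants assumed)."""
    target: float = 60.0     # desired fluid temperature in deg C (hypothetical)
    tolerance: float = 0.5   # band around the target that counts as success
    ambient: float = 20.0    # ambient temperature in deg C
    gain: float = 2.0        # heating per step at full power, in deg C
    loss: float = 0.02       # fraction of the excess over ambient lost per step
    delay_steps: int = 5     # steps before a heater command reaches the fluid
    temperature: float = 20.0
    _pending: deque = field(default_factory=deque, init=False, repr=False)

    def __post_init__(self):
        # Commands already in the delay line: the heater has been off so far.
        self._pending = deque([0.0] * self.delay_steps)

    def step(self, power):
        """Apply a heater command in [0, 1]; return (temperature, reward)."""
        power = min(1.0, max(0.0, power))
        self._pending.append(power)
        effective = self._pending.popleft()  # command issued delay_steps ago
        self.temperature += self.gain * effective
        self.temperature -= self.loss * (self.temperature - self.ambient)
        # Success/failure signal only: 1 inside the tolerance band, else 0.
        success = abs(self.temperature - self.target) <= self.tolerance
        return self.temperature, (1.0 if success else 0.0)


def run_episode(env, policy, steps=200):
    """Roll out a policy on the environment and return the summed reward."""
    total = 0.0
    temperature = env.temperature
    for _ in range(steps):
        temperature, reward = env.step(policy(temperature))
        total += reward
    return total


def bang_bang(temperature, target=60.0):
    """Naive baseline: full power below the target, heater off otherwise."""
    return 1.0 if temperature < target else 0.0


if __name__ == "__main__":
    print("steps inside the tolerance band:", run_episode(DelayedHeater(), bang_bang))
```

The threshold baseline keeps heating until the measured temperature reaches the target, so the commands still in the delay line push the fluid past it. A learning agent would take the place of `bang_bang` and would have to anticipate this delay using only the success/failure reward.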

Publications

- M. Riedmiller and R. Schoknecht. Einsatzmoeglichkeiten neuronaler Regler im Automobilbereich [Possible applications of neural controllers in the automotive sector]. Diploma thesis, Proceedings of the VDI-GMA Aussprachetag 1998, Berlin, March 19.

- M. Riedmiller. High quality thermostat control by reinforcement learning - a case study. Proceedings of the CONALD Workshop 1998, Carnegie Mellon University, 1998.

Contact

For more information on this research project, please contact Martin Riedmiller.